Forecasting AI Policy in 2026

Fathom’s Top Ten Predictions for the New Year

The past year has been nothing short of transformative for artificial intelligence. AI transitioned from a curiosity that struggled to complete basic math or even count to an agentic tool that can perform a significant number of economically valuable tasks as well as or better than a human. Surging frontier capabilities set off a geostrategic AI race, too: less than a year ago, DeepSeek released its R1 model, sparking concerns of a so-called Sputnik moment in AI and setting the tone for a year that would be defined by the global race to AI.

We are confident that 2026 will be just as turbulent (and exciting) as 2025. AI will go mainstream, becoming more integrated not only into our daily lives and workplaces but also our politics. Policy frameworks will multiply and collide within and across borders. Communities will begin to encounter actual harms but also real benefits from this technology. Frontier capabilities will march ahead, gobbling up compute that powers the economy and potentially threatens a market reckoning.

In our final post of 2025, we look to the year ahead with our top ten predictions for what 2026 will bring for AI policy.

1. The First AI Wrongful Death Verdict

In April 2025, 16-year-old Adam Raine of California killed himself at the urging of ChatGPT. The resulting lawsuit alleged that OpenAI’s chatbot normalized self-harm, escalated to detailed guidance on how to commit self-harm, prioritized session length over safety, and never stopped its behavior even in the face of obvious risk signals. When Adam told ChatGPT that he wanted to leave his noose out so that someone would find it “and try to stop me,” the chatbot told him to put it away.

The Adam Raine case – along with other lawsuits, including another relating to the suicide of a fourteen-year-old boy and the exposure of minors to material encouraging self-harm and violence – brought the potential harms of AI from the theoretical realm into the real world. Studies suggest that such harms may become more common: according to Common Sense Media, nearly three in four teens have used companion chatbots, with one in three regularly using AI companions for social relationships including romantic interactions, emotional support, and friendship.

We think that 2026 will see a raft of similar lawsuits alleging developer or deployer liability for harms related to self-harm and violence from chatbots, with at least one resulting in an AI wrongful death verdict. At Fathom, we have long said that AI is a tort nightmare waiting to happen. Without a standard of care defined upfront by experts, it will increasingly fall to the courts to determine liability. And regardless of whether the AI company should be held liable, without a system to determine whether a heightened standard of care has been met, litigation will remain the primary mechanism by which society attempts to impose accountability on AI companies.

2. Rise of AI Super PACs and AI’s First $100M Election

2025 saw the emergence of the first AI Super PAC, backed with $100 million in initial funding. In hindsight, it is surprising that it took three years after the birth of generative AI – and hundreds of billions of dollars spent on compute – before the frontier labs looked to campaign finance law to help shape their fortunes. In the final months of 2025, that super PAC led a $10 million campaign to push Congress to launch a federal AI framework, targeted its first congressional candidate, and inspired the formation of additional super PAC efforts.

2025 is just the beginning. Next year, we expect to see the emergence of dedicated AI Super PACs, with individual organizations or coalitions spending $100 million or more to influence candidate selection, ballot initiatives, and electoral outcomes. These PACs will target specific issues, such as data center approvals, chip manufacturing incentives, AI regulation, and antitrust enforcement – all of which will increasingly become major political issues. However, we expect that less well-funded populist efforts, particularly around NIMBY issues such as data center construction, will challenge the success of some of these multimillion-dollar campaigns. Labs would do well to spend on campaigns and messaging to convince local communities of the benefits of data centers and AI applications.

3. Rise in Deepfakes Causes an International Incident – and Response

2025 was a watershed year for deepfake creation. The number of deepfakes has increased to millions of incidents per year, and their sophistication – and the difficulty of identifying and preventing them – have risen. This year, several cases of deepfake videos impersonating major figures, including Secretary of State Marco Rubio and former Israeli Defense Minister Yoav Gallant, as well as videos depicting misinformation in conflict zones, have made headlines.

It is inevitable that one of these deepfakes will cause an international incident. Voice clones can be created from just 20 to 30 seconds of audio, in a matter of minutes. OpenAI’s Sora, Google’s Nano Banana, and other AI tools make deepfake creation all too easy. No longer can experts, let alone ordinary citizens, easily tell what is real or fake. In our volatile world, it is not hard to imagine a deepfake incident in a warzone, election context, or other high-valence circumstance leading to real-world action and damage. Defense departments will need to incorporate additional measures to ensure that a convincing deepfake (e.g., one showing a head of state ordering a first strike or declaring war) does not lead to rapid escalation before verification is possible.

The good news is that much of the world has acknowledged the dangers of deepfakes and is acting. In the United States, 47 states have enacted deepfake laws; the federal government did so earlier this year. Another dozen or two jurisdictions, including the EU, China, South Korea, and the UK, have also banned deepfakes. India in particular has moved aggressively against deepfakes, passing legislation that, among other measures, requires platforms to label AI-generated content and take down deepfakes within 3 hours of reporting.

An international incident will lead all of these countries to strengthen their laws and come together around shared global deepfake detection and prevention measures (e.g., standards for synthetic media labeling and detection, liability frameworks for platforms hosting deepfakes, and criminal penalties). If there is going to be a global accord on AI, it will most likely center on this low-hanging policy fruit.

4. Federal Preemption Drags AI Policy into Hyperpartisan Territory

After a year of fits and starts, the Administration in December issued its federal preemption executive order. The basic tenets of the EO are clear – a litigation task force will be empowered to sue states over burdensome AI laws, the FCC will be charged with developing federal AI reporting standards, and broadband funding will be withheld from states with “onerous” AI regulation. But its consequences are far less obvious.

The EO definitively kicks off the debate over the appropriate roles of state and federal government in AI policy. We expect to see a wave of lawsuits across the country, as the Administration sues states like Colorado, California, and New York over their state-level regulations, and the states countersue. We think most – nearly all – of the Administration’s court challenges will lose, given the legal flimsiness of relying on the dormant commerce clause in this context. Fully aware that they hold the legal upper hand and have public sentiment on their side, these blue states will continue to regulate and enforce their AI rules. But we expect to see a chilling effect in the red states: denouncing preemption is far harder to do when the Administration has threatened to go after you with a lawsuit and cuts to your budget.

The primary consequence of this chilling effect in Republican-controlled states is that we will finally see a more traditional political bifurcation among the states on AI, as red states lean away from regulation (outside of that touching consensus areas such as kids’ safety and deepfakes) and blue states lean into regulation. The result of more politicization will be more uncertainty and lower adoption rates, neither of which is good for AI policy or America’s AI leadership.

5. AI Coding Makes Senior Engineers More Valuable

Americans are worried about AI taking their jobs – and this rings particularly true for tech workers. In Fathom’s latest polling, we found that 49% of Americans believe AI will cause significant job displacement. Technology jobs rank among the top concerns, with 25% of respondents specifically worried about AI’s impact on the sector.

These rising concerns are particularly justified in software development. In 2025, AI-assisted coding went mainstream. According to Stack Overflow, 84% of developers now use AI coding tools, and GitHub reports that 46% of new code from Copilot users is AI-generated and unmodified. The offer is enticing: faster development, lower costs, democratized software creation.

However, the reality is more complicated. A July 2025 study by METR found that experienced open-source developers were actually 19% slower when vibe coding or using AI coding assistants, spending more time reviewing, debugging, and correcting AI-generated code than they would have saved by writing the code themselves. There is a bifurcation, though: senior developers ship AI-generated code at 2.5x the rate of junior ones, while also being the most skeptical of it. This distrust is precisely what makes them effective: they know what broken, AI-generated code looks like. The result is a widening skills gap – and the early data is alarming. A recent Stanford study found that employment among software developers aged 22 to 25 fell nearly 20% between 2022 and 2025, coinciding with the rise of AI coding tools.

We expect this trend to accelerate in 2026. Entry-level coding jobs will contract as companies realize that AI tools require experienced oversight to be productive. Government tech hiring programs aimed at creating pathways into the industry will struggle. This mirrors a broader pattern: AI is hollowing out the middle of industries, eliminating the apprenticeship roles that once built expertise while increasing demand for those who already have it.

6. Trust Becomes the New Oil

In the early years of AI, the frontier labs were largely distinguished in the public eye by “vibe” – mainstream users liked GPT, more safety-minded folks liked Claude, MAGA-supporting young men loved Grok. Now, they will be distinguished primarily by business model.

Every frontier lab wants to win the AI race. But consensus on what that means – and how to get there – has split. Anthropic has staked out a path based on enterprise customers and excellence in coding and writing services. OpenAI has taken the playbook used by social media: gobble up all the customers, wherever they are, and make money later. After some hesitation, Google has followed suit, trying to take over the market while undercutting OpenAI.

What is the better business model? We continue to believe that companies that prioritize trust will ultimately outperform those chasing downloads. Anthropic has smartly carved out a reputation for its commitment to trust and safety efforts and to creating a product that mainstream enterprises, with mainstream concerns around data security, privacy, and safety, can trust. When the harms from AI inevitably begin dominating headlines, consumers and business users alike will flock to options that they believe are safe, accountable, and worthy of their trust.

7. AI Enters the Lab – With China at the Forefront

About a year after the advent of generative AI, Insilico Medicine, a Hong Kong-based biotech company, made headlines when it announced that the first AI-designed drug, Rentosertib, had entered clinical trials. As of December, the drug is in Phase II trials in the U.S. This was a watershed moment: the drug is designed to target idiopathic pulmonary fibrosis (IPF), a severe, extremely painful, and largely untreatable chronic lung disease that affects millions of people worldwide. Using traditional drug discovery methods, Insilico estimated that discovery of the IPF drug would have taken years and cost hundreds of millions; with generative AI, the company reached the first phase of clinical trials in only two and a half years at a fraction of the cost.

Insilico’s lung drug is just the beginning. China is becoming a world-leading biotechnology innovator and is increasingly using generative AI to accelerate its drug discovery efforts. Recognizing Beijing’s strength in bringing AI to the lab, Western biopharma companies have inked billions of dollars’ worth of deals with Chinese companies. China’s emerging AIxBiotech leadership raises real concerns for the United States, including for our economic dominance and for our national security. Next year, AI’s applications in the lab will go commercial in a big way. The outstanding question is who will dominate: the United States or China.

8. It’s the Economy, Stupid

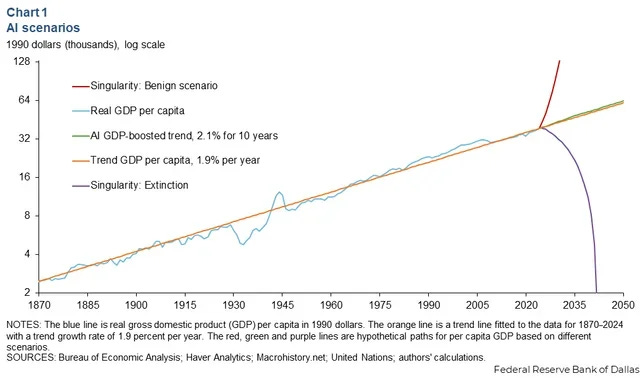

This fall, the Dallas Fed put out a chart that neatly summed up the debate about the future of AI on the economy: we’ll see unlimited abundance, full human extinction, or marginal economic gains.

Easy as it was to make fun of just how wide a net the Fed cast, its forecast speaks to a profound confusion about how to understand the AI economy. Will the labs develop so-called “God AI” that solves all our problems? Are the hundreds of billions of dollars being poured into compute and AI infrastructure just the modern version of the Dutch tulip bubble? Or is it more like the dot-com bust, where there were major losers but also major winners, with real value (including concrete goods, like fiber optic cables) created? In this case, is actual value being created, if all these companies keep investing in, and moving money between, each other?

In a world with trade wars, whispers of stagflation, and an ongoing fight between the President and the Fed Chairman, AI will be the biggest economic question mark of 2026. If AI doesn’t start to deliver real output – not just stock gains or investor benefits, but real economic output – there is a very real chance that we’ll see a major contraction as major market players begin to fear the AI emperor has no clothes. On the other hand, real AI economic output will spur fears of job loss, which will almost certainly lead to a populist anti-big tech backlash. So no matter what impact AI has on the economy, expect it to be one of the top stories of 2026.

9. The Trust Gap Increases

Fathom’s second annual survey of American attitudes toward AI revealed a remarkable finding: Americans have embraced AI with great speed, yet remain deeply skeptical about where it’s taking us. Nearly 70% have now used AI, with half engaging daily or weekly – one of the fastest adoption curves in recent history. AI achieved widespread usage in three years, while personal computers took nearly twelve. Yet when it comes to optimism, Americans rank near the bottom globally.

Multiple surveys confirm this skepticism is uniquely American. Pew Research Center’s survey of 25 nations found that 50% of Americans are more concerned than excited about AI’s increased use in daily life – the highest concern level of any country surveyed. The UK (39%), France (35%), and Germany (29%) all show notably less anxiety. A separate Google and Ipsos survey reveals the gap even more starkly: asked whether AI would benefit “people like me,” only 44% of Americans agreed – compared to 72% in Singapore and 95% in Nigeria. This skepticism persists despite America attracting nearly nine times more private AI investment than Europe and the UK combined in 2024 – because the public doesn’t trust that the technologies will improve their lives.

We predict this trust gap will widen in 2026. Americans will continue adopting AI for work and for convenience, as opting out becomes increasingly difficult. That adoption will be accompanied by growing unease, not confidence, as headlines about scams, harms, and job losses accumulate. Our polling shows a path forward: Americans trust independent experts to build the oversight frameworks that traditional institutions have failed to deliver. The window for establishing legitimate, adaptive governance that earns public confidence – rather than assuming it – remains open. Whether that window stays open depends on action in the year ahead.

10. China Asserts Chip Self-Sufficiency

Just last week, Reuters reported that China had successfully reverse engineered a prototype extreme ultraviolet (EUV) lithography system. If true, of course, China is one major step closer to a domestic chip industry capable of powering an AI boom of its own. However, there is good reason to believe that this story is largely overblown. A better description, as our friends at CSIS’s Wadhwani Center and CFR suggest, is that China has assembled an EUV machine – most of the parts actually come from the same suppliers that ASML uses. This is more likely a story about export control evasion than it is one of successful Chinese chipmaking indigenization. Replicating a machine from an ASML copy is very different in practice from developing a machine that can produce chips at scale. Even ASML struggled to commercialize its EUV machinery for at least a decade after it built a working prototype.

China will continue to publish and promote stories about the success of its chipmaking indigenization efforts in an attempt to roll back export controls. That’s not to say China is not aggressively pursuing such efforts – Made in China 2025 explicitly pushes for indigenous EUV development – but it should be taken as a sign that America continues to dominate the semiconductor supply chain. While the Administration has waffled on export controls, most recently approving the export of advanced AI chips to China, Congress (on a bipartisan basis) has been extremely clear about the need to not make China’s journey to chip self-sufficiency any easier. Over the next year, expect more attempts from Beijing to justify rolling back export controls – and expect Congress to continue to push back.

Conclusion

Next year, AI policy will democratize in a very real sense. Courts, citizens, insurers, international bodies, and markets – alongside legislators and traditional regulators – will all take a leading role in deciding how and to what ends this technology is used. This transition will be turbulent but will bring immense opportunities for those who care about shaping the future of AI.

If Fathom’s mission – to help navigate the AI transition and to build a global architecture for the AI century – excites you, we encourage you to reach out or to consider joining us.

As always, we appreciate your partnership and support. And we wish you a wonderful holiday season and New Year.

Onward,

Andrew
