Americans Are Using AI More Than Ever – Why Is AI Anxiety Rising Too?

Insights from Fathom’s Latest Polling and Focus Groups

Artificial Intelligence (AI) has become so ubiquitous in our daily lives that it can be hard to believe it’s only been three years since ChatGPT brought generative AI into our public discourse. AI has quietly woven itself into how we work, create, learn, and make decisions – often so seamlessly that people barely notice when they’re using it. As individuals, we experience its benefits in real time; as a society, we are grappling with legitimate questions about its implications for our jobs and the workforce, the quality and role of education, and how we relate to each other.

Fathom just completed our second annual survey of American attitudes toward AI (building on our 2024 report AI at the Crossroads), and what we found is remarkable: Americans have embraced AI with great speed, yet the public remains extremely skeptical about where it’s taking us.

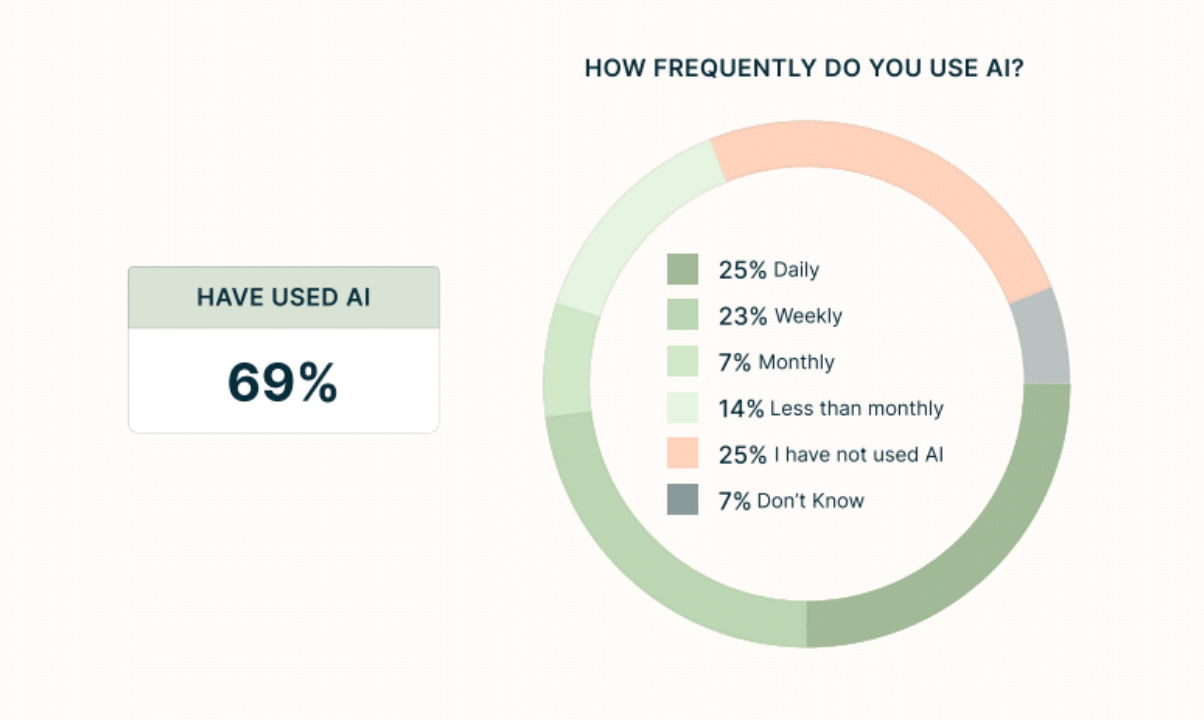

Nearly 70% of Americans have now used AI, with nearly half of users engaging with it daily or weekly. This represents one of the fastest adoption curves in recent history. AI achieved these levels of widespread usage in three years; personal computers took nearly twelve. Yet when it comes to AI optimism, Americans rank near the bottom globally.

Multiple surveys confirm that this skepticism is uniquely American. Pew Research Center’s survey of 25 nations found that 50% of Americans are more concerned than excited about AI’s increased use in daily life – the highest concern level of any country surveyed. The UK (39% concerned), France (35%), and Germany (29%) all show notably less anxiety about AI than Americans do.

A separate Google and Ipsos survey on AI attitudes around the world reveals the enthusiasm gap even more starkly. When asked whether AI would benefit “people like me,” only 44% of Americans agreed – compared to 72% in Singapore and a striking 95% in Nigeria. The U.S. trails not only technological powerhouses like South Korea (62%) and other advanced Western economies such as Germany and the Netherlands (both around 50%), but also emerging economies across Latin America and Asia, including Mexico (75%), India (76%), and Brazil (69%). This skepticism persists despite America attracting six times more private AI investment than Europe and the UK combined.

To understand this tension, we surveyed 2,036 Americans and conducted focus groups across the political spectrum in California and Ohio, asking people directly about how they use AI and what concerns them most.

What we found is a disconnect that provides a window into how our society is grappling with transformative technology. In this post we unpack why adoption is so high while excitement is so tempered – and what that means for U.S. policymaking.

How Americans Adopted AI

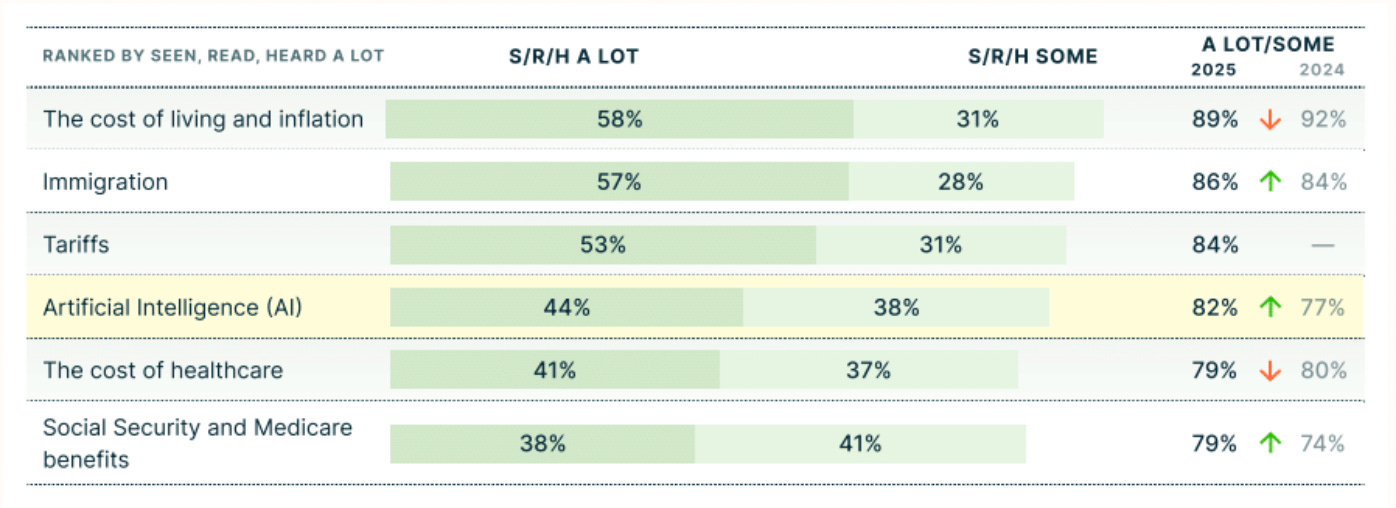

Our polling reveals that AI awareness has reached 82% of the American public. In just the past year, since our last poll, AI went from tech industry buzzword to kitchen table conversation.

Awareness only tells part of the story. What’s striking is how deeply AI has embedded itself in daily life – and how quickly. Nearly seven in ten Americans now report having used AI. A full quarter use it daily.

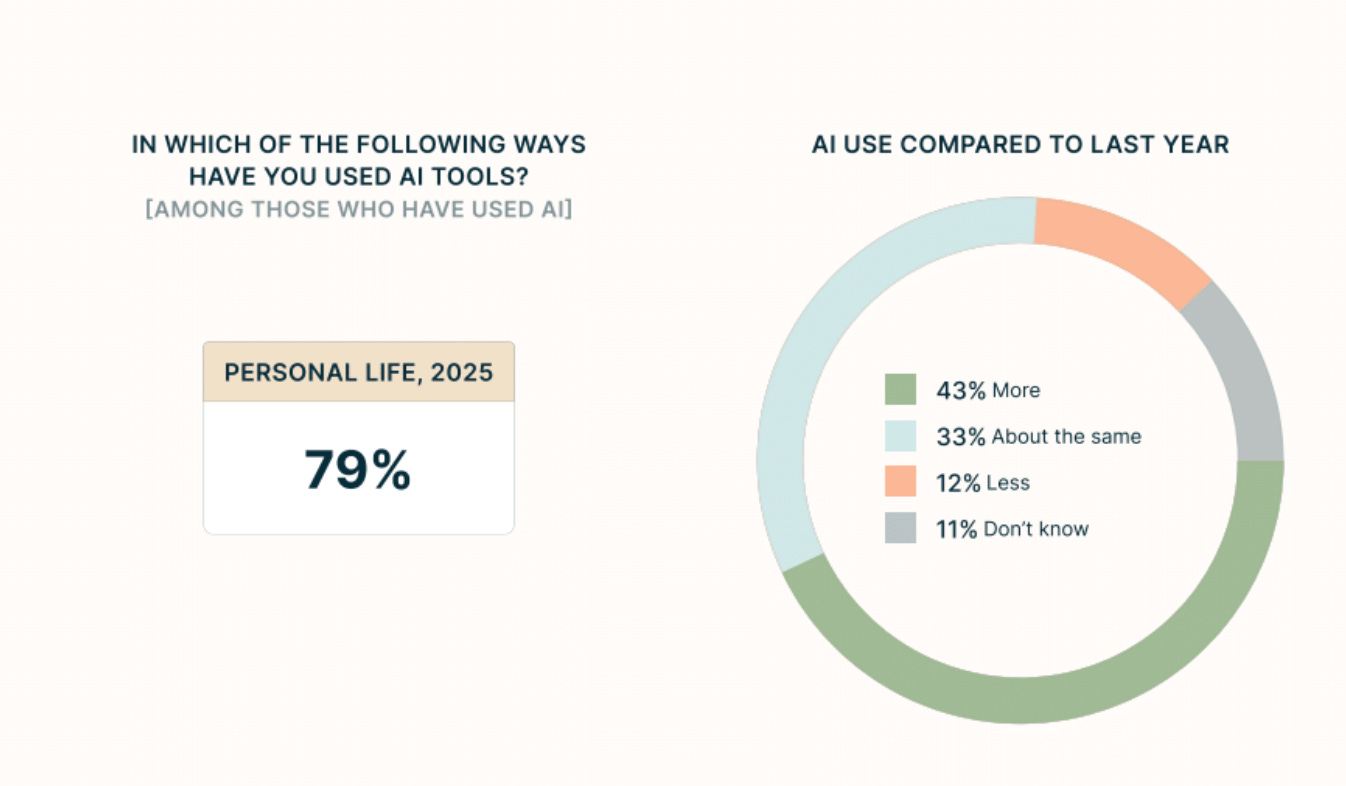

And adoption looks set to grow: 43% of Americans report using AI more this year than last.

The more revealing shift is where that usage shows up: not just in our workplaces, but in our personal lives. Among people who use AI, nearly 80% have integrated it into their daily routines outside of work – almost double the 42% who report using it professionally.

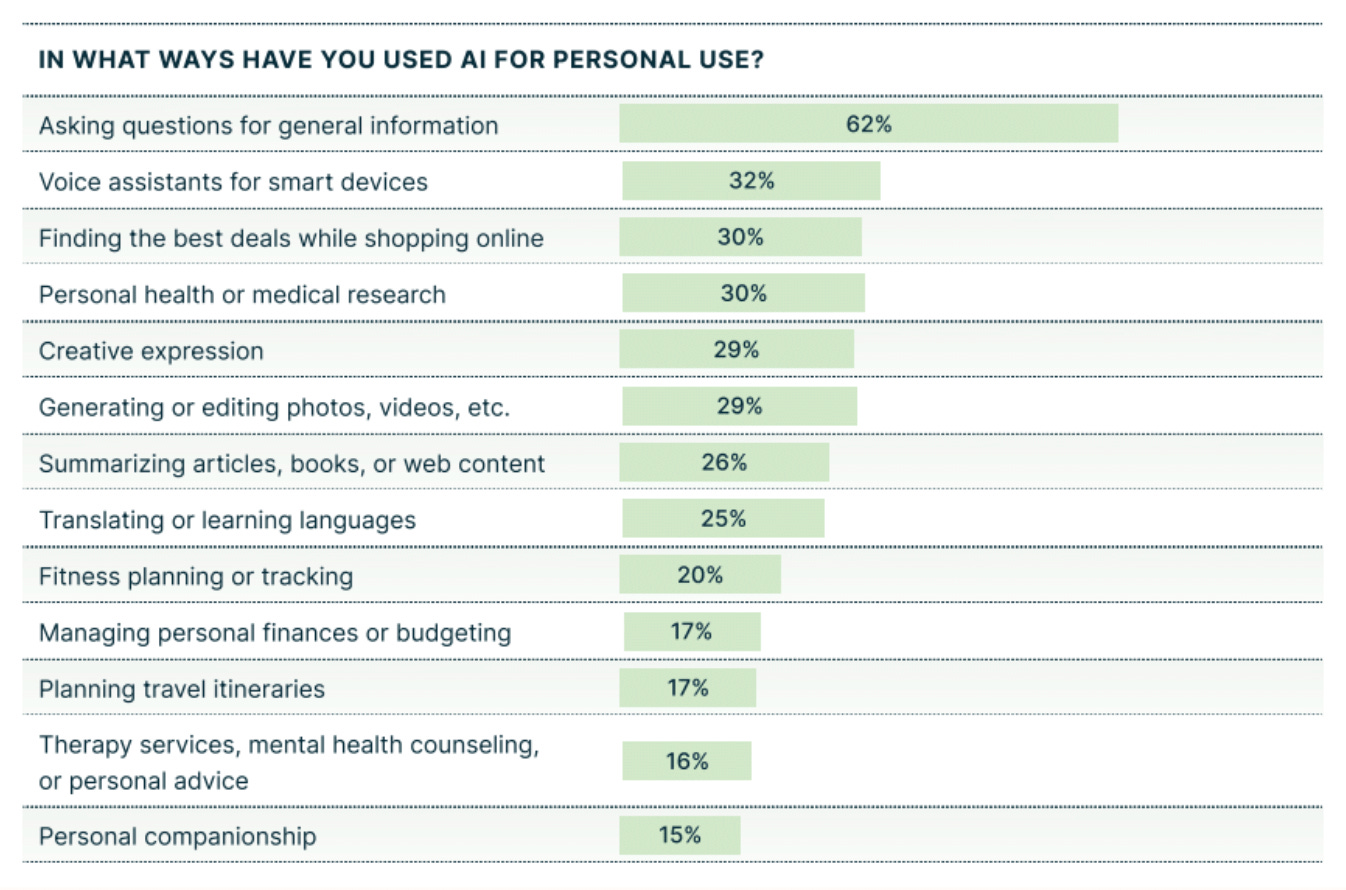

The picture is clear: most Americans are using AI to some degree. But if we dig deeper, we see that people are not using AI for radically new applications: 62% use it for asking questions and getting information, essentially treating it as a supercharged search engine.

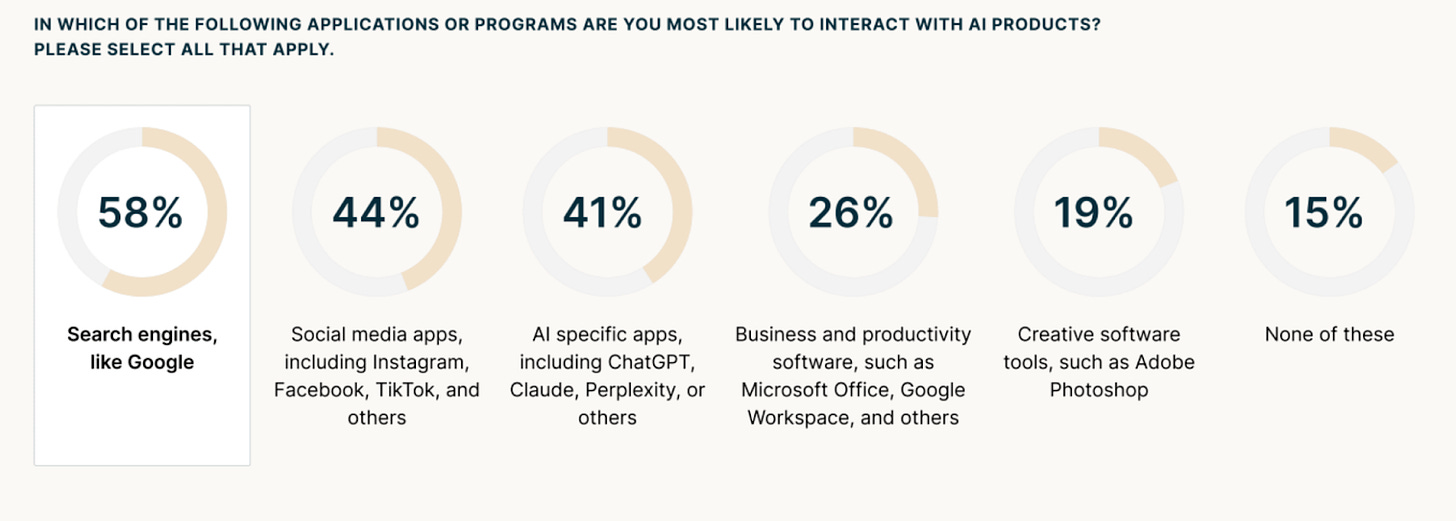

A lot of this use comes from a nearly invisible integration of AI into other technologies. When we asked people where they interact with AI, 58% said through search engines like Google. Another 44% encounter it on social media. Only 41% deliberately open ChatGPT, Claude, or similar tools.

The integration has been so seamless that many users may not even recognize when they’re using AI-powered features. It’s just part of how technology works now.

Why Adoption Has Bred Excitement – But Also Ambivalence

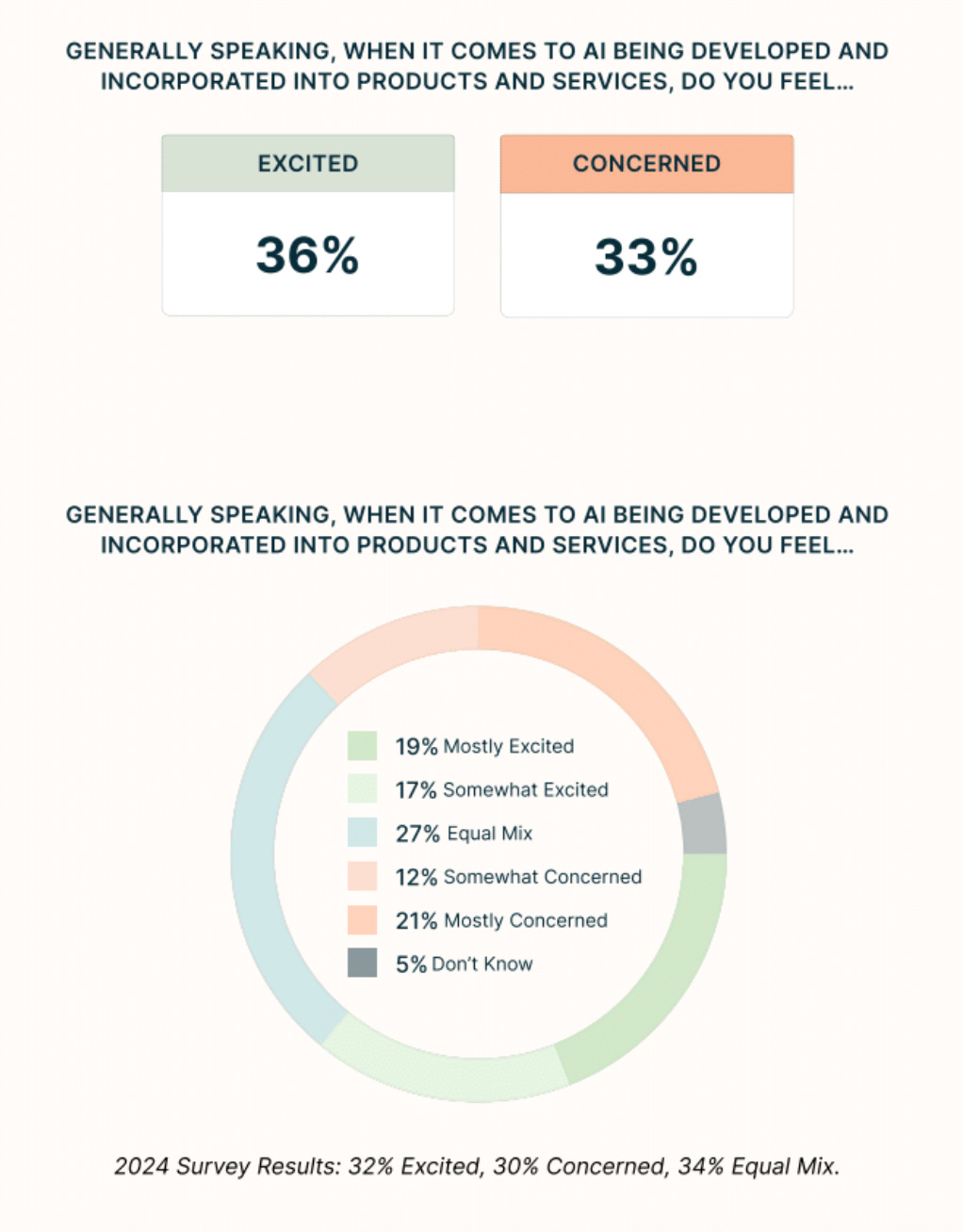

Americans are getting more excited about AI – but only marginally. Over the past year, public excitement has climbed from 32% to 36%, while concern rose from 30% to 33%. If you just look at the numbers, optimism is winning. But the gap is narrow. And what’s perhaps most revealing: 27% of Americans sit squarely in the middle, equally excited and concerned.

This isn’t the typical American response to transformative technology. For example, when smartphones arrived, 72% of early adopters described them positively, with only 16% expressing negative sentiment – adoption and enthusiasm moved together. With AI, we’re seeing something different: rising adoption, rising excitement, but also rising questions about what it means.

This tension manifests across multiple sectors and applications. People are excited about AI’s potential to boost productivity, accelerate scientific breakthroughs, and speed up healthcare innovation. At the same time, many expressed concerns about job displacement, data privacy, and the erosion of critical thinking skills. In this section, we focus on two areas where these concerns are particularly acute: employment and education.

The Five-to-Ten-Year Question: How Will AI Affect the Job Market?

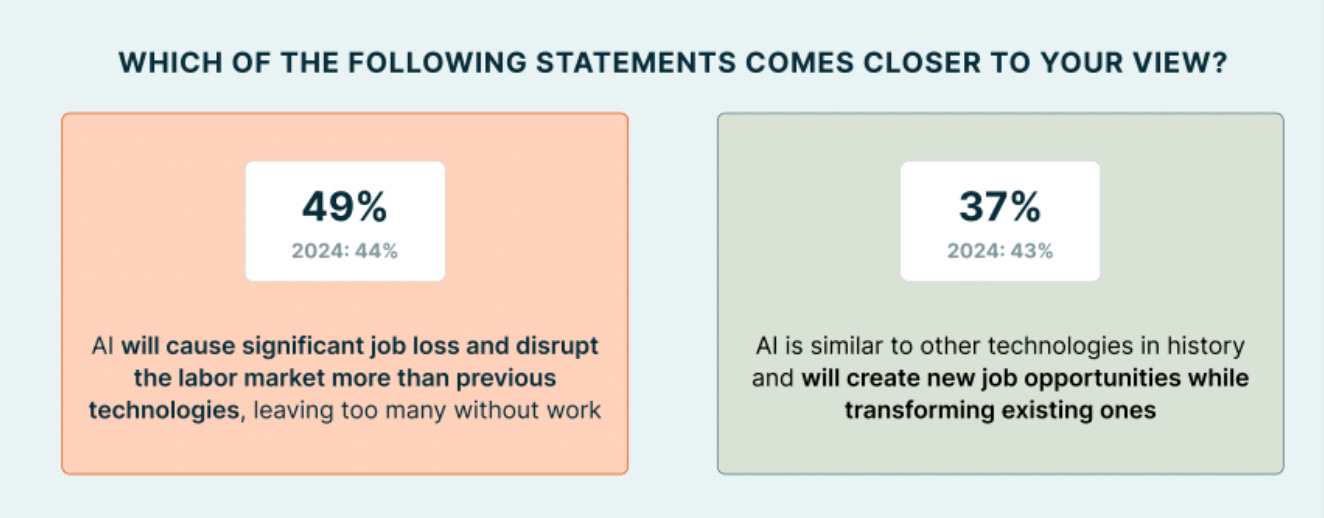

On the employment front, nearly half of Americans (49%) believe AI will cause significant job displacement. Only 37% believe it will create new opportunities while transforming existing roles. This is a major shift from the year before, when the public was split on the future impact of AI on jobs.

Interestingly, concern about job loss is highest among those who haven’t adopted AI yet. But even daily users recognize AI’s potential to reshape labor markets.

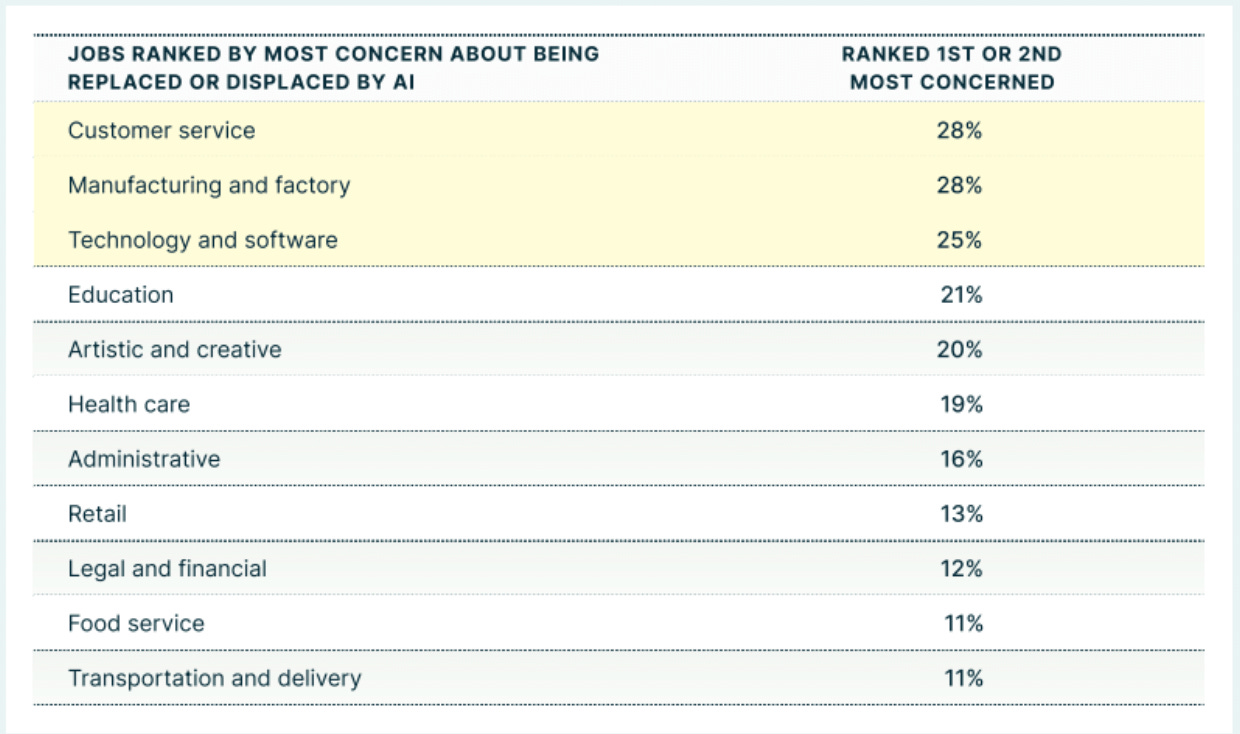

The specific job concerns span multiple sectors. Customer service and manufacturing roles share the top of the worry list at 28% each, followed by technology jobs (25%). Even creative fields register 20% concern.

One professor in our focus groups captured the tension: “I would be concerned about job loss for my specific job 5 to 10 years out. At some point I’m expecting in 5 to 10 years that AI might be publishing well-done peer-reviewed research, and I think that’s when I start to get nervous.” Five to ten years is close enough to feel real, far enough away that enthusiasm still outweighs anxiety – creating space for thoughtful governance.

What AI Means for How Children Learn

The scope of AI use in education is already striking: 56% of students use it for writing or editing assignments, 55% for solving math and science problems, and 55% for summarizing readings. This represents a fundamental shift in how students approach their work. “How would they even know if you just ChatGPT the whole essay?” one California parent asked, capturing the detection challenge that educators face daily. But some teachers are already adapting to this new reality. As one participant explained, describing a friend who teaches eighth grade: “He’s trying to tell them, ‘Hey, look, you’re going to use this tool for the rest of your life probably, but you have to understand why you’re using it and how to use it.’”

Overall, parents and teachers see AI’s utility – the homework help, the tutoring, the accessibility – while wrestling with deeper questions about learning and development. “What happens when our kids get older and suddenly, they’ve gone to school and they really don’t know anything or don’t know any life skills?” one participant asked. They’re not debating whether kids will use AI – they acknowledge that’s already happening – but rather which skills still matter when AI can handle so many of the cognitive tasks traditionally taught in the classroom.

What Americans Agree On

In an era of deep political polarization, here’s what stands out in our data: Americans broadly agree on the need for AI guardrails.

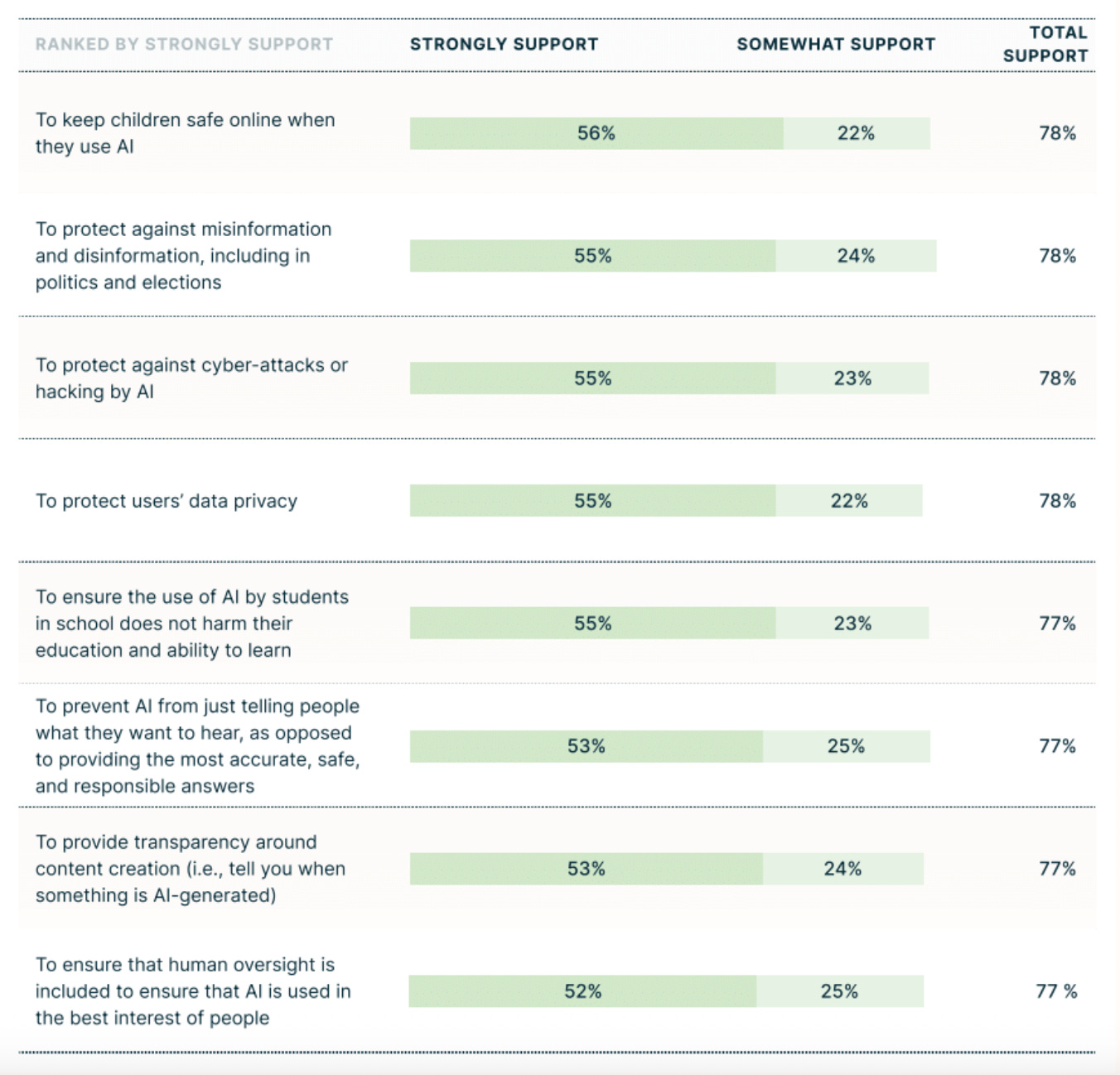

More than 75% support establishing protections around children’s safety online, combating misinformation in politics and elections, preventing cyber-attacks, protecting data privacy, ensuring AI use in education doesn’t harm learning, and maintaining human oversight. These are substantial demands that command supermajority support across party lines.

The consensus extends to economic protections: over 70% support both protecting jobs that could be affected by AI and providing economic support for displaced workers. The enthusiasm for AI’s benefits coexists with a desire for guardrails, not as contradictions but as complementary responses to the same technology.

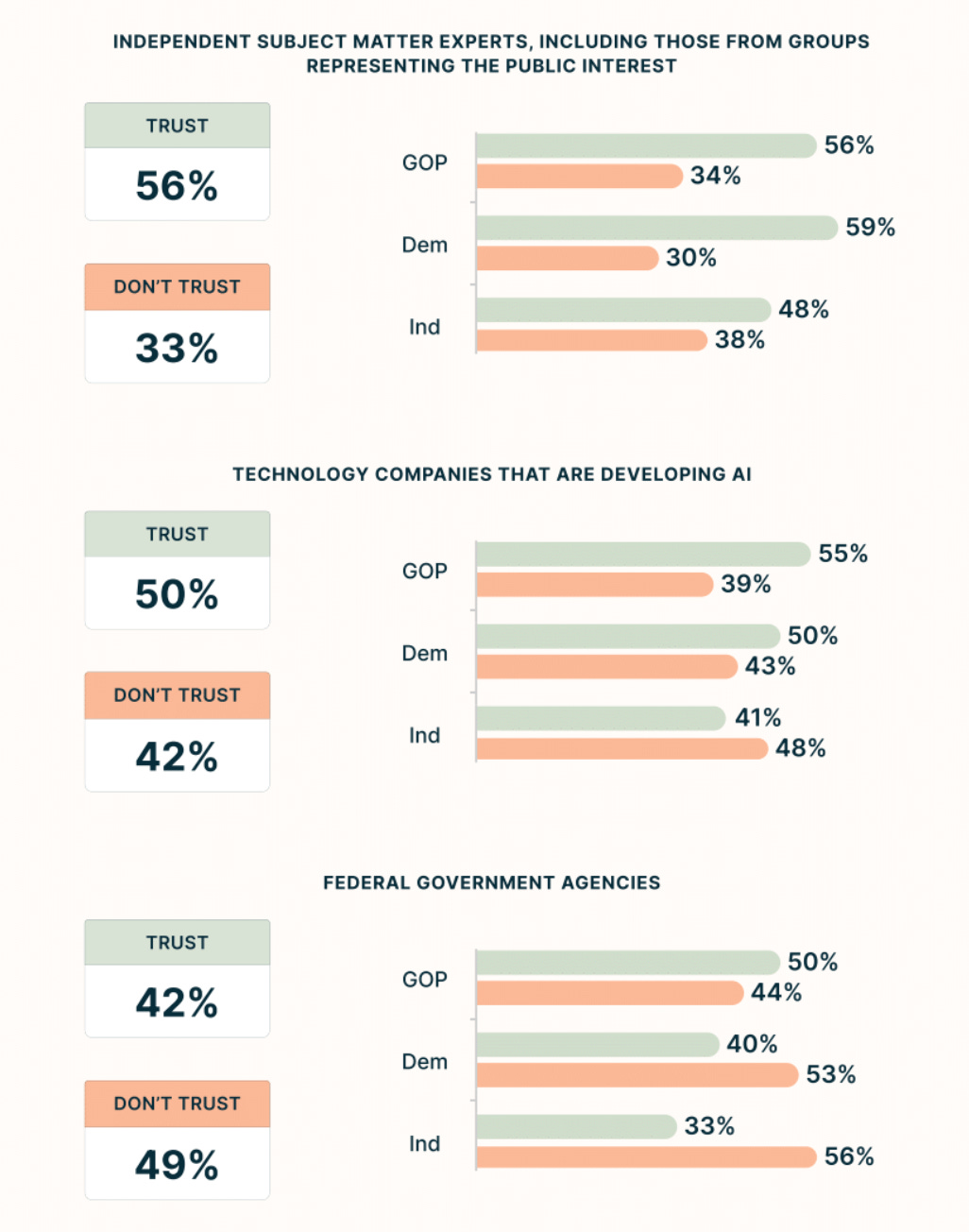

And here’s what makes this consensus particularly valuable: Americans also seem to agree on who they trust – and who they do not trust – to implement those guardrails.

Only 42% trust the federal government to regulate AI effectively. Just 50% trust the technology companies building it. And only 43% trust the two working together on AI governance.

Focus group participants were clear about why: “The government first needs to educate themselves, like our legislators aren’t AI experts,” one California Democrat explained. An Ohio Independent was blunter about government capacity: “There’s a lack of ability to get things done, it seems like, in the federal government. They’re very slow.”

The only group that crosses the majority threshold is independent subject matter experts and public interest groups, at 56% – and that support holds across party lines.

Why Ambivalence Creates Opportunity

Our research and convening efforts suggest that American ambivalence about AI isn’t a flaw – it’s a feature of a democratic society processing transformative change. The strong bipartisan consensus around both the need for guardrails and trust in independent experts points toward a clear opportunity: governance innovation that keeps pace with technological innovation.

This is the moment for different models of governance – and specifically, for expert-led frameworks of independent oversight that can evolve as quickly as the technology itself. Such an approach could provide the accountability and better outcomes Americans seek without the rigidity of traditional regulation or the conflicts of interest created by pure self-regulation.

Reading the Room on AI

Three years after ChatGPT came into our lives, Americans are rapidly adopting the technology while remaining thoughtful about its implications. What we’re missing isn’t public consensus about what to do next – that exists across party lines. It’s the institutional infrastructure to act on it, and the political will to seize this bipartisan alignment.

If we can build frameworks that are expert-led, adaptive, and accountable, we can foster a safer, more trusted AI future. That’s good for Americans and for our innovation and tech leadership in the world.

The ambivalence in our data isn’t indecision. It’s an invitation for policymakers to act.

For the full findings, read our complete report. To schedule a briefing, please contact us here.